A squad of soldiers is on the hunt. Their prey: a killer robot, sent by the enemy to attack their position. But when the soldiers follow what seems to be a trail left by the device, the robot is nowhere to be found. It was a trick, and the killer robot is still at large.

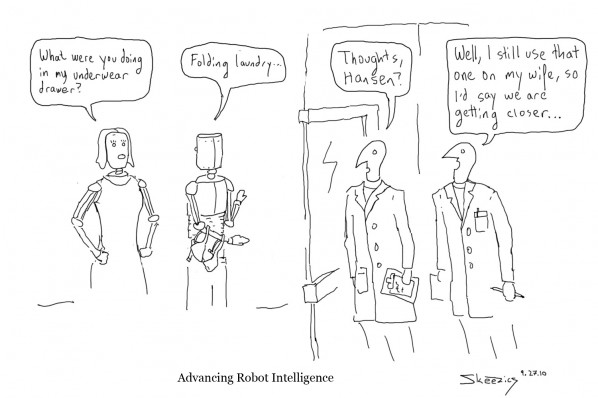

Such a scenario seems straight out of a science-fiction movie, but researchers at the Georgia Institute of Technology, who claim to have programmed robots to deceive, believe it could become reality. The study, which was published in the International Journal of Social Robotics on September 3rd, used a group of robots that tried to hide from a “seeker” robot by creating false trails to fool the seeker into looking the wrong way. The robots were taught to deduce whether a situation required deception and how to use it.

The authors of the study, researchers Alan R. Wagner and Ronald C. Arkin, saw the development as an important step towards creating better, smarter robots.

“For many social robotics and multi-robotics application areas, the use of deception by a robot may be rarely used, but is nonetheless an important tool in the robot’s interactive arsenal,” the pair say in the study. The study also claims that the use of deception could easily be applied to social or even military robotics, including robots with the ability to kill.

In his earlier book, Governing Lethal Behavior in Autonomous Robots, Arkin claimed that robots with the power to choose when to kill may be more empathetic during battle.

Robots, he says, “can potentially perform more ethically in the battlefield than humans are capable of doing.”

Arkin argues that giving robots lethal capacity is a necessary step in reducing violence by eliminating human emotion and error. But what happens when those robots are able to lie and deceive? Are we on the verge of a robot uprising, where killer robots can choose to lie to humans?

Not even close, said Ryan Hunter (ENG ’11), President of the BU Unmanned Aerial Vehicle Club and CEO of aerial surveillance company TR Aeronautics. Hunter explained that current technological limits prevent any robot from being able to process information as fast as a human, making self-awareness a near impossibility.

“The human brain has an estimated processing power of about 1 Petaflop,” he said. That’s approximately the computing power of 1,000 laptops running simultaneously. Only certain large, expensive supercomputers can reach that kind of speed today. And without that kind of speed, a robot would be unable to think for itself. If a robot cannot think for itself, Hunter said, it cannot lie.

“The concept of lying requires self-awareness,” he said. “The thing it comes down to is ‘what does it mean to be self-aware’? The honest answer is we don’t know.” Until robots do reach the stage of self-consciousness, he said, there’s little fear of them choosing to lie to humans for their own purposes.

As for the study, Hunter believes that claiming to teach a robot to lie might be a stretch. “It’s simulated lying,” he said. “The robot doesn’t know it’s lying, it’s just doing something that appears to be lying.”

So fear not. “There’ll be no ‘Sonny’ robots running around,” Hunter said, referring to the rogue killer robot in the 2004 sci-fi flick I, Robot.

Instead, Hunter thinks the next wave of military technology will be what he calls “semi-autonomous” robots, such as the Predator drone currently used by the CIA, which can carry out missions and instructions independently but still needs a human operator to oversee the missions and step in if something goes wrong.

In place of one human operator controlling one robot, Hunter thinks we may see systems that become independent enough that one person can control an array of robots in the field at once. “You can have a guy controlling 20 of these vehicles.”

Autonomous robots are not outside the range of possibility, though they could only be designed to carry out specific kinds of missions. Hunter is currently working on such a robot: a device that a squad under fire can launch into the sky, where it would provide long-term surveillance by circling the squad leader on its own. Self-aware robots, however, are probably still far down the pipeline.

“You never say never,” he said. “There’s a lot of guys who said ‘never’ and got burned. But I’d say it’s still a ways off.”

