4-Player is a new, weekly video game column examining gaming culture on campus and online, documenting a previously unrepresented segment of BU’s culture. 4-Player is co-written by Jon Christianson, Ashley Hansberry, Allan Lasser, and Burk Smyth.

Whenever I think of video game music, I hear the opening bars of the Super Mario Bros. theme play in my head. Do do do doot do doot doot. It’s been almost thirty years since the original Super Mario Bros., and video games have grown up since then. Their graphics, rules, and stories have grown more complicated, and their music has followed the trend. Today’s video games integrate their music so tightly that as players interact within the game’s ruled boundaries, the soundtrack mutates under the influence of their decisions.

While a mention of video game music might bring up memories of Dance Dance Revolution, Guitar Hero, and Elite Beat Agents, that’s not what I’m talking about. In those games, Norbert Herber (Department of Telecommunications, Indiana University at Bloomington) says the music “operates in a binary, linear mode” and fails to “recognize the emergence, or becoming, that one experiences in the course of an interactive exchange. A traditional, narrative compositional approach leaves no room for the potential of a becoming of music.” Put another way, those experiences lead the player along without exploiting any of the interactivity inherent to the medium. The goal of those games is playing along to music and matching patterns that are rigidly defined from the get-go.

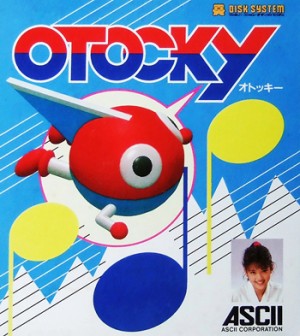

Instead, what I am talking about are games that allow players to inhabit elaborate, interactive ecosystems. Within games like Otocky, Portal 2, the most recent SimCity, and Spore, soundtracks become the byproducts of a player’s actions and situations. The musical mechanics of these games evoke Herber’s concept of a composition-instrument, which he defines as “a conceptual framework that helps facilitate the creation of musical systems for interactive media, art, and telematic environments.” A less pretentious way of describing it is as an interactive work that incorporates the very music it generates. While Herber blankets his ambiguous term under its classification as an even vaguer “conceptual framework,” the composition-instrument provides a helpful reference for the idea of a work that is never finished and is always feeding back into itself. These games work as composition-instruments and span a full spectrum of musical complexity, from the low-resolution music generated by a Famicom title all the way to the complex, procedurally generated music of a modern simulation game.

The first video game to exhibit generative music was a game for the Famicom, the Japanese version of the Nintendo Entertainment System. The game was called Otocky and was developed by Toshio Iwai, a pioneer of video game music who would later go on to create SimTunes and Electroplankton. Instead of a soundtrack, Otocky has what Bruno de Figueiredo remembers as “a predetermined beat or bass loop that plays as aural background.” The player’s button presses generate tones, with pitches determined by which of the eight directions the player pressed on the control pad. De Figueiredo says, “Consequentially, the game presents a scenario where the music is not entirely pre-conceived as it is, in fact, created by the player in result of the actions he performs during the game.” By matching the pitch and phase of actions to the percussive foundation, the game provides a mechanism for players to forgo their spastic trigger instinct for a more intuitive, musical one. Because the game removes any cues and forces players to invent its soundtrack, musical talent translates directly into gaming talent.
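To make that mechanic concrete, here is a minimal sketch of the idea in Python. It is not Otocky’s actual code: the note assigned to each direction, the beat length, and the assumption that presses are snapped to the nearest beat are all hypothetical choices made for illustration.

```python
import math

# Hypothetical mapping: each of the eight control-pad directions gets one
# note of a C major scale (MIDI note numbers). The real game's pitches are
# not documented in this column.
DIRECTION_TO_MIDI = {
    "up": 72, "up_right": 71, "right": 69, "down_right": 67,
    "down": 65, "down_left": 64, "left": 62, "up_left": 60,
}

def quantize_to_beat(press_time, beat_length):
    """Snap a button press (in seconds) to the nearest beat boundary."""
    return math.floor(press_time / beat_length + 0.5) * beat_length

def play(presses, beat_length=0.5):
    """Turn (time, direction) presses into (beat_time, midi_note) events."""
    return [
        (quantize_to_beat(time, beat_length), DIRECTION_TO_MIDI[direction])
        for time, direction in presses
    ]

# A slightly early press and a slightly late press both land on the beat.
events = play([(0.1, "up"), (0.9, "left")])
```

The point of the sketch is that the player supplies both the pitch (through direction) and the rhythm (through timing), while the game only supplies the grid they play against.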

The music of Valve’s 2011 blockbuster Portal 2, while higher-fidelity, resembles the implementation of player-generated music in Otocky. The game’s composer, Mike Morasky, explained that as the mechanics of different devices are layered to solve one of Portal 2’s puzzles, each device contributes a musical layer to the game’s score as well. He says that this “turns the mechanics of the puzzle into a sort of interactive music instrument that you can explore by selectively triggering the different channels of music with differing timings and configurations.” Each element within a puzzle is given a memorable musical identity, cueing players to permute these different elements during their hunt for a solution. Morasky also explains that the music is modified by the player’s orientation in space, encouraging the thorough exploration of the environment for clues. As in Otocky, Portal 2’s music is not ornamental but strategic: it is used to reduce “puzzle fatigue” and encourages a sense of fun even when the player is confronted by a frustrating and seemingly insurmountable obstacle.
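The layering Morasky describes can be pictured as a lookup from active puzzle devices to music channels. The sketch below is only an illustration of that idea, not Valve’s implementation: the device keys and layer names are made up for the example.

```python
# Hypothetical table: which musical layer each puzzle device contributes.
DEVICE_LAYERS = {
    "laser": "arpeggio",
    "faith_plate": "bass_pulse",
    "light_bridge": "pad",
}

def active_score(active_devices):
    """Return the music layers that should be audible right now.

    Each device the player has triggered adds its own layer, so the
    score grows and shrinks as the puzzle is assembled and taken apart.
    """
    return sorted(
        DEVICE_LAYERS[device]
        for device in active_devices
        if device in DEVICE_LAYERS
    )

# Redirecting a laser while standing on a light bridge plays two layers.
layers = active_score({"laser", "light_bridge"})
```

Because the layers follow the devices, a player who experiments with the puzzle is also, in effect, experimenting with the arrangement.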

In an effort to describe a multifaceted interactive space through sound, SimCity composer Chris Tilton relied on the same style of combinative soundtrack as heard in Portal 2. In an interview with The Verge, Tilton said that he:

> Wrote each piece of music in several different layers depending on which kind of mode you’re in. So if you’re zoomed out in the region view you’ll hear a lot more orchestral stuff… as you get a middle-of-the-road view, some of the guitar elements and simpler things start to come in. And then when you get really close and you’re zoomed in, you hear more sounds in the city and a lot less music.

Aside from representing scales, the music also changes with the attitude of the game as it shifts from constructive build screens to analytic statistical displays. Furthermore, the tempo is synchronized with the game’s simulation quanta (or number of “ticks” per second), providing the animations and virtual objects with a sense of weight and motion. SimCity takes some of the musical agency away from the player, but it remains a composition-instrument because the game’s music is determined by the decisions players make during the game. Music is composed by the game, but according to rules that the players invoke.
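A rough sketch of how zoom-dependent layers and a tick-synchronized tempo could fit together follows. This is an assumption-laden illustration, not SimCity’s audio code: the linear crossfade curves, the layer names, and the `ticks_per_beat` parameter are all hypothetical.

```python
def layer_gains(zoom):
    """Map a zoom level to per-layer volume.

    zoom runs from 0.0 (region view) to 1.0 (street level). The
    orchestra fades out over the first half, the guitar layer peaks in
    the middle, and city ambience takes over when fully zoomed in.
    """
    zoom = min(1.0, max(0.0, zoom))
    return {
        "orchestra": max(0.0, 1.0 - 2.0 * zoom),
        "guitar": 1.0 - abs(2.0 * zoom - 1.0),
        "city_ambience": max(0.0, 2.0 * zoom - 1.0),
    }

def tempo_bpm(ticks_per_second, ticks_per_beat=4):
    """Derive a tempo so that every beat spans a whole number of ticks."""
    return 60.0 * ticks_per_second / ticks_per_beat

region_view = layer_gains(0.0)
street_view = layer_gains(1.0)
bpm = tempo_bpm(8)
```

Under these assumptions, speeding up the simulation speeds up the music with it, which is one way the score could stay locked to the motion on screen.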

Will Wright, the developer of the original SimCity, applied procedural generation intensively to the creation of music in his 2008 video game Spore. Almost a decade ago, Wright gave a remarkable talk at the 2005 Game Developers Conference about procedural generation in video games, and part of his lecture involved a demonstration of Spore, which was still early in its development. Spore was originally nicknamed SimEverything, and the simulator Wright demonstrated wasn’t just ambitious; it was unbelievable. When given the physical form of an imaginary creature (with any number or combination of arms, legs, eyes, mouths, and horns), Spore could procedurally generate the animal’s animations and attributes. With this technology, Spore simulates entirely imaginary worlds, and the entire end phase of the game involves the exploration of a procedurally generated galaxy.

The music in Spore, following the spirit of the game, is all procedurally generated as well. Wright hired Brian Eno to help create Spore’s score. Eno is a pioneer of ambient, generative music, which he describes as “a sort of steady state condition that you entered, stayed in for awhile, and then left… So it doesn’t have beginning, it doesn’t have development, it doesn’t have climaxes.” For the game, Eno created the rules that generated the music rather than the music itself. He describes his work as designing “seeds, rather than forests” for the finished game.

Herber recounts that Eno “looks at works like In C, or anything where the composer makes no top-down directions, as precursors to generative music. In these works detailed directions are not provided. Instead there is ‘a set of conditions by which something will come into existence.’” The music in Spore, and in fact the entirety of the game itself, is a composition-instrument. But players may not recognize this, since they have no direct hand in the music themselves. Instead, by creating a unique life-form, the player provides the raw rules for the music’s generation. As the player’s creature evolves, the music mutates along with it. The music does not narrowly define the experience of the game; rather, the vast possibilities accommodated by the interactivity of video games define the boundaries of the music. Everything generated within that bounded possibility space is not only unique, but more attributable to the player than to Eno or the game itself.
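The “seeds, rather than forests” idea can be sketched in a few lines: the composer writes rules, and the player’s creature supplies the seed those rules grow from. The example below is a toy under stated assumptions, not a description of how Spore’s music system works internally; the pentatonic scale, the trait names, and the random-walk rule are all invented for illustration.

```python
import random

# The composer's fixed material: a C major pentatonic scale (MIDI notes).
SCALE = [60, 62, 64, 67, 69]

def melody_for(creature, length=8):
    """Grow a melody from a creature's traits.

    The traits are flattened into a string that seeds the random
    generator, so the same creature always yields the same melody and
    a different creature yields a different one.
    """
    seed = ",".join(f"{name}={creature[name]}" for name in sorted(creature))
    rng = random.Random(seed)
    index = rng.randrange(len(SCALE))
    notes = []
    for _ in range(length):
        notes.append(SCALE[index])
        # Rule: move at most one scale step at a time, staying in range.
        index = min(len(SCALE) - 1, max(0, index + rng.choice([-1, 0, 1])))
    return notes

# Hypothetical creature description supplied by the player.
melody = melody_for({"legs": 4, "eyes": 2})
```

Nothing in the function is a melody; it only constrains what a melody can be. The notes that come out depend on what the player built.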

Video games are a young medium. Developers are still coming to grips with the idiosyncrasies of video games when compared to other modes of expression. In particular, they are only beginning to embrace expansive, non-linear experiences over tightly controlled linear narratives. The uncomposed music of games is the first reflection of their status as composition-instruments and objects of genuine interactivity. Rather than bemoan the destruction of architected musical compositions by algorithms, players and composers should both welcome the potential for rich, surprising, and personal experiences with the music of games.
